The solar opportunity for commercial properties has never been clearer. Energy costs remain high, grid uncertainty is a boardroom conversation, and the economics of on-site generation have shifted decisively in favour of businesses that act. For energy suppliers, this should be a straightforward sell.

And yet most solar sales programmes still run on the same blunt instrument: a customer list, a product offer, and a team making calls. Conversion rates are modest. Wasted effort is high. Good prospects get missed. Poor ones get called three times.

The problem isn't the product. It's the list.

The Data Problem — and Why It's Harder Than It Looks

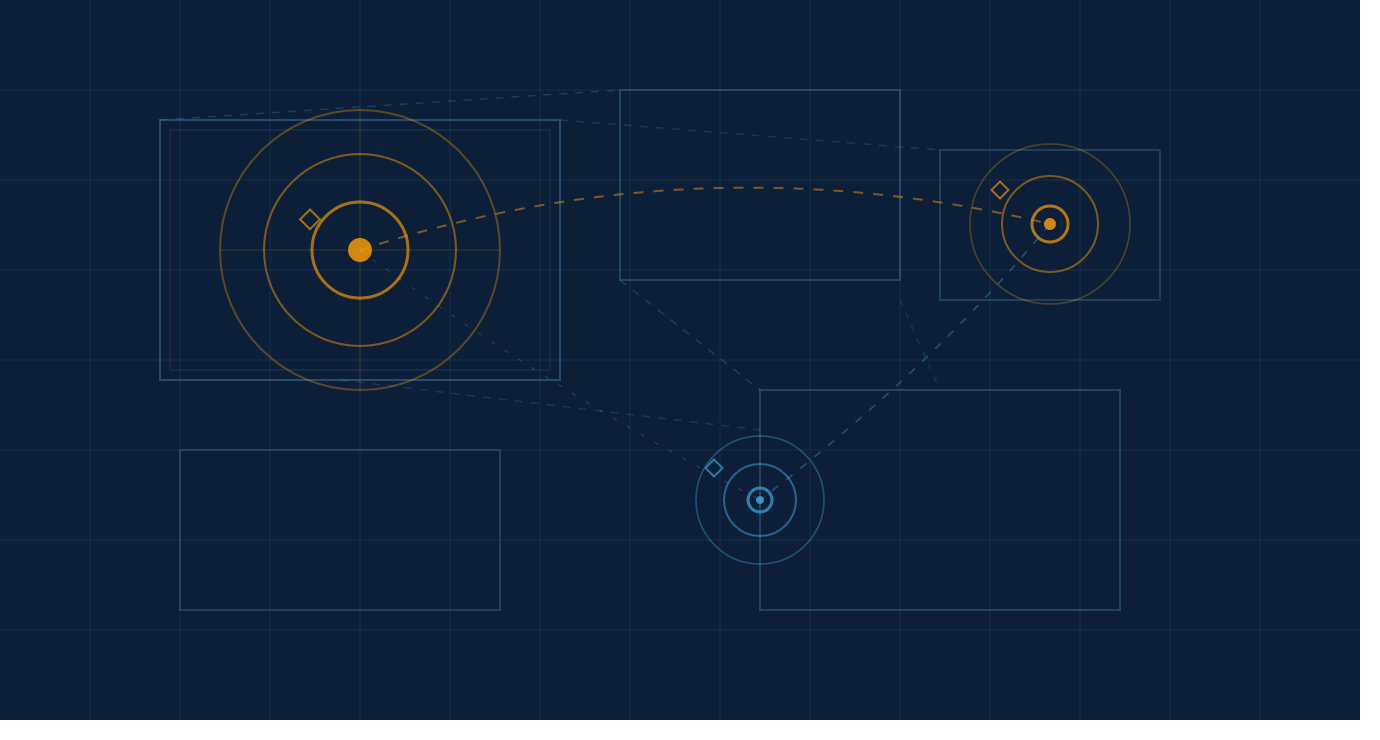

The information needed to build a genuinely predictive solar targeting model for commercial properties already exists. It's publicly available, it's comprehensive, and in most cases it's free. This is the point at which many people stop and assume the problem is solved — that you can point an AI tool at a few open datasets and have something useful in an afternoon.

You can't. And understanding why requires understanding what the data actually is, where it lives, and what it takes to turn it into something a sales team can act on.

The datasets you need span roof geometry derived from satellite imagery, energy intensity benchmarks by building type, flood risk classifications, planning records, Energy Performance Certificate (EPC) ratings, and rateable value data. Each has its own format, its own update frequency, and its own failure modes. EPC ratings for commercial buildings, for instance, are frequently incomplete, inconsistently recorded, and in many cases simply wrong: the result of variable survey quality across thousands of assessors over many years. Treating them as ground truth will corrupt your model. Knowing not to treat them as ground truth, and knowing what to use instead, is not something you learn from a dataset. It comes from time spent understanding how commercial buildings are actually assessed and how that data finds its way into public registers.

Knowing which sources to trust, which to triangulate, and which to handle with care — that's where the real work begins.

What You Actually Need to Know About Each Variable

Roof geometry and orientation. Satellite-derived building data can tell you roof area, pitch, and aspect. A large south-facing industrial roof is a fundamentally different proposition from a complex multi-level retail unit — different yield, different installation complexity, different payback period. Extracting that information cleanly from raw building data, at scale, across mixed-quality satellite coverage, is a data engineering problem. Knowing why it matters, and how to weight it correctly in a scoring model, is an energy problem. You need both.
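Here is a minimal Python sketch that shows why roof size alone misleads. It combines area, pitch and aspect into an effective usable area. Every constant in it is an illustrative assumption, not a calibrated value: the 60% usable fraction, the 30° reference pitch, the cosine-shaped orientation penalty and the fixed factor for flat roofs. A production model would draw on proper irradiance and yield modelling instead.

```python
import math
from dataclasses import dataclass

# Illustrative assumptions only, not calibrated values.
USABLE_FRACTION = 0.6       # share of roof assumed free of plant, rooflights and edge margins
REFERENCE_PITCH_DEG = 30.0  # tilt treated as best case
FLAT_ROOF_FACTOR = 0.9      # flat roofs assumed to take racked, well-oriented panels


@dataclass
class Roof:
    area_m2: float     # roof area from satellite-derived building data
    pitch_deg: float   # 0 = flat
    aspect_deg: float  # compass bearing the slope faces; 180 = due south


def geometry_factor(roof: Roof) -> float:
    """Return a 0-1 multiplier for how well pitch and aspect suit solar."""
    if roof.pitch_deg < 5.0:
        return FLAT_ROOF_FACTOR
    # Angular distance from due south, 0-180 degrees.
    off_south = abs(roof.aspect_deg % 360.0 - 180.0)
    # Cosine falloff (assumed): 1.0 due south, 0.7 east/west, 0.4 due north.
    orientation = 0.7 + 0.3 * math.cos(math.radians(off_south))
    # Linear penalty for tilting away from the reference pitch (assumed).
    tilt = max(0.0, 1.0 - abs(roof.pitch_deg - REFERENCE_PITCH_DEG) / 90.0)
    return orientation * tilt


def effective_area_m2(roof: Roof) -> float:
    """Roof area discounted for usable space and geometry."""
    return roof.area_m2 * USABLE_FRACTION * geometry_factor(roof)


big_north = Roof(area_m2=4000, pitch_deg=30, aspect_deg=0)
small_south = Roof(area_m2=2000, pitch_deg=30, aspect_deg=180)
print(round(effective_area_m2(big_north)), round(effective_area_m2(small_south)))
```

Under these assumptions, the 4,000 m² north-facing roof comes out at roughly 960 m² of effective area. The 2,000 m² south-facing roof comes out at 1,200 m². That is why raw roof area makes a poor ranking variable on its own.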

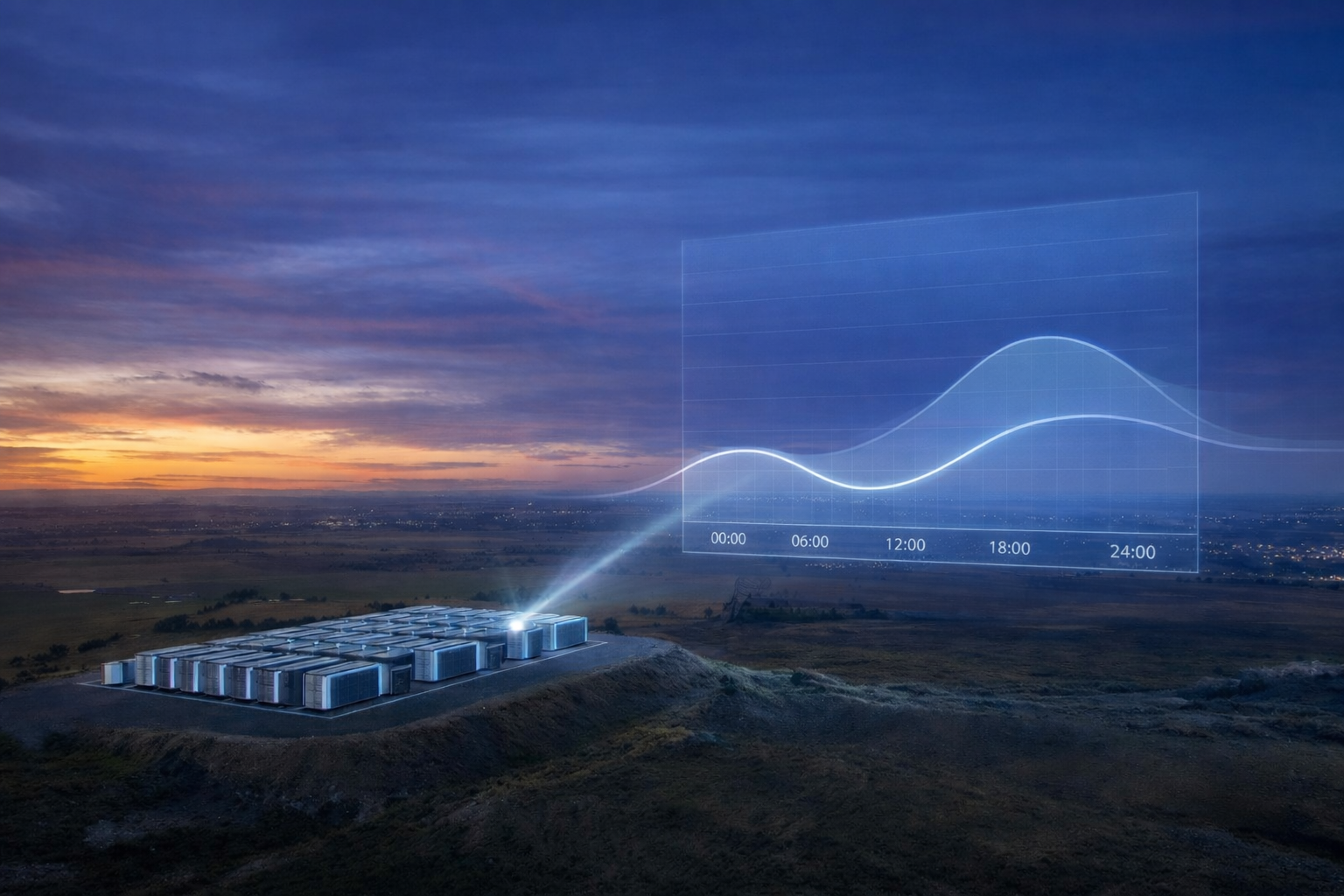

Energy consumption. This is the variable that most directly determines whether the economics of solar — and increasingly, solar paired with battery storage — actually work for a given business. Individual metered consumption data for private commercial properties isn't publicly available. But there are well-established ways to estimate it with meaningful accuracy from sources that are — combining building characteristics with sector-level benchmarks to produce a figure that's good enough to separate the businesses where the numbers work from those where they clearly don't. Knowing where that threshold sits, for different building types and different solar configurations, requires the kind of commercial modelling experience that goes well beyond knowing where the data is.
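The estimation approach described above combines building characteristics with sector-level benchmarks. It can be sketched in a few lines of Python. The benchmark intensity, specific yield and minimum-cover threshold below are placeholders, not published figures. Comparing annual totals also ignores the timing mismatch between generation and demand, which is the gap storage is there to address.

```python
def estimate_annual_demand_kwh(floor_area_m2: float, benchmark_kwh_per_m2: float,
                               adjustment: float = 1.0) -> float:
    """Floor area x sector energy-intensity benchmark, with an optional scaling
    factor for known characteristics (e.g. extended operating hours)."""
    return floor_area_m2 * benchmark_kwh_per_m2 * adjustment


def estimate_annual_generation_kwh(system_kwp: float,
                                   specific_yield_kwh_per_kwp: float) -> float:
    """Installed capacity x expected annual yield per kWp."""
    return system_kwp * specific_yield_kwh_per_kwp


def demand_cover(demand_kwh: float, generation_kwh: float) -> float:
    """Crude annual proxy for how much generation the site could use itself (capped at 1)."""
    return min(1.0, demand_kwh / generation_kwh)


def numbers_likely_work(demand_kwh: float, generation_kwh: float, min_cover: float) -> bool:
    """min_cover is a threshold to calibrate per building type and configuration."""
    return demand_cover(demand_kwh, generation_kwh) >= min_cover


# Placeholder inputs for illustration only.
demand = estimate_annual_demand_kwh(floor_area_m2=5_000, benchmark_kwh_per_m2=120)
generation = estimate_annual_generation_kwh(system_kwp=200, specific_yield_kwh_per_kwp=900)
print(demand, generation, numbers_likely_work(demand, generation, min_cover=0.8))
```

The code only makes the threshold explicit. Choosing `min_cover` for each building type and configuration is still the judgement call.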

Flood risk. This variable is particularly important — and frequently overlooked — where battery storage forms part of the solution, which increasingly it does. The economics of time-of-use tariffs and grid export constraints mean that solar-plus-storage is becoming the default proposal for many commercial customers rather than the premium option. Battery installations require careful siting. High flood risk zones create real complications around placement, insurance, and in some cases planning consent — complications that can derail a project late in the sales process if they haven't been identified upfront. A business in a flood risk area isn't automatically a poor prospect. But it's a different conversation, requiring a different solution design, and sometimes a different commercial structure. Knowing that, and encoding it correctly in a scoring model, requires understanding how these projects are actually delivered on the ground — not just that flood risk data exists as a variable.
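One way to encode "a different conversation, not a poor prospect" is to let flood risk change the proposed design and attach review notes, rather than simply cutting the score. The Python sketch below makes two assumptions. It uses England-style flood zones numbered 1 to 3, and it applies hypothetical penalty values. Both the bands and the numbers would need to come from the people who deliver these projects.

```python
from dataclasses import dataclass, field

# Hypothetical score adjustments by flood zone (1 = low risk, 3 = high risk).
FLOOD_PENALTY = {1: 0.0, 2: 0.1, 3: 0.2}


@dataclass
class Assessment:
    score: float
    design: str
    review_notes: list[str] = field(default_factory=list)


def apply_flood_risk(base_score: float, flood_zone: int, includes_battery: bool) -> Assessment:
    """Adjust design and notes for flood risk instead of excluding the prospect."""
    # Assumption: flood risk is only modelled here when storage is part of the proposal.
    if flood_zone <= 1 or not includes_battery:
        return Assessment(base_score, "standard")
    notes = [
        "Review battery placement before proposal",
        "Confirm insurance position for on-site storage",
        "Check whether flood risk affects planning consent",
    ]
    # Unknown zones fall back to the largest configured penalty.
    penalty = FLOOD_PENALTY.get(flood_zone, max(FLOOD_PENALTY.values()))
    return Assessment(base_score * (1 - penalty), "flood-aware storage design", notes)


print(apply_flood_risk(0.8, flood_zone=3, includes_battery=True))
```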

Planning history and constraints. Listed building status, conservation area designations, recent planning applications — all of these sit in local authority databases, updated inconsistently, formatted differently across hundreds of councils. Cross-referencing them against a commercial prospect list at scale is a non-trivial technical exercise. Knowing which constraints are disqualifying, which are manageable with the right approach, and which are irrelevant to a rooftop solar installation — that's sector knowledge. Miss this variable entirely and you'll be sending salespeople to buildings that were never going to get planning consent.
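The cross-referencing step can be sketched in Python in two stages. It first blocks records by normalised postcode, then fuzzy-matches address lines within each block using the standard library's `difflib.SequenceMatcher`. The record layout, the 0.85 similarity cut-off and the category labels are assumptions for illustration. Deciding which constraints belong in which category is precisely the sector knowledge described above, so the sketch leaves that mapping to the caller.

```python
import re
from collections import defaultdict
from difflib import SequenceMatcher


def normalise_postcode(raw: str) -> str:
    """Uppercase, remove spaces, re-insert one space before the 3-character inward code."""
    compact = re.sub(r"\s+", "", raw).upper()
    return f"{compact[:-3]} {compact[-3:]}" if len(compact) > 3 else compact


def normalise_address(raw: str) -> str:
    """Lowercase, turn punctuation into spaces, collapse whitespace."""
    return " ".join(re.sub(r"[^\w\s]", " ", raw.lower()).split())


def attach_constraints(prospects, planning_records, min_similarity=0.85):
    """Return {prospect id: set of constraint labels} via postcode blocking + fuzzy address match."""
    by_postcode = defaultdict(list)
    for rec in planning_records:
        by_postcode[normalise_postcode(rec["postcode"])].append(rec)

    result = {}
    for p in prospects:
        address = normalise_address(p["address"])
        found = set()
        for rec in by_postcode.get(normalise_postcode(p["postcode"]), []):
            similarity = SequenceMatcher(None, address, normalise_address(rec["address"])).ratio()
            if similarity >= min_similarity:
                found.update(rec["constraints"])
        result[p["id"]] = found
    return result


SEVERITY = {"irrelevant": 0, "manageable": 1, "disqualifying": 2}


def worst_category(constraints, rules):
    """rules maps constraint -> category; unknown constraints default to 'manageable' for review."""
    categories = [rules.get(c, "manageable") for c in constraints]
    return max(categories, key=SEVERITY.__getitem__, default="irrelevant")


# Made-up records for illustration.
prospects = [{"id": "P1", "address": "Unit 4, Riverside Trading Estate", "postcode": "ab12 3cd"}]
planning = [{"address": "UNIT 4 RIVERSIDE TRADING ESTATE", "postcode": "AB123CD",
             "constraints": ["conservation_area"]}]
matches = attach_constraints(prospects, planning)
print(matches, worst_category(matches["P1"], rules={}))
```

Blocking by postcode keeps the fuzzy comparison cheap. Each prospect is only compared against records that share its postcode, rather than against every record held by every council.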

Why AI Doesn't Solve This on Its Own

This is the point worth being direct about. Applied AI has genuinely changed what's possible here. Tasks that used to take weeks of manual data engineering (entity matching across inconsistent address formats, probabilistic gap-filling in incomplete records, classification of building types from imagery) now compress significantly in the hands of a skilled team. The speed advantage is real and it's material. A working prototype that might once have taken months can now be built in a fraction of that time.

But the operative phrase is skilled team. AI accelerates execution. It does not replace the judgement that determines what to build, which variables to include, how to handle the data quality problems that surface at every stage, or what the output needs to look like to be usable by the people who will actually rely on it.

Someone unfamiliar with commercial energy markets who attempts this exercise using a general-purpose AI tool will run into difficulty at almost every step. They'll pull data that looks relevant but isn't reliable. They'll weight roof size too heavily because it's the most visible variable, without understanding that a large roof with the wrong pitch and aspect can still produce a marginal or unviable case. They'll produce an output that a data scientist can interpret but a sales manager can't use. And they won't know, at any of those points, that something has gone wrong — because the model will still produce numbers, the numbers will still rank the prospects, and the list will look plausible right up until the conversion rate tells a different story.

The value of this kind of work isn't access to the tools or even access to the data. It's the layer of domain knowledge and engineering rigour that sits underneath: the part that determines whether the output is genuinely predictive or just confidently wrong.

Why Explainability Is Non-Negotiable

There's a version of this that stops at the score — a ranked list with a number next to each name, no further explanation. That version is useless in a B2B sales context, and building it that way is a mistake that comes from thinking about the problem as a data science exercise rather than a commercial one.

A commercial sales conversation is not a consumer transaction. The person on the other end of the call is likely to ask why their business has been contacted, what the assessment is based on, and why it's relevant to them specifically. A salesperson who can answer those questions is not selling; they're advising. They can explain that the building's characteristics suggest energy costs that make the economics favourable, that the site geometry looks well suited, and that a combined solar and storage solution may be worth exploring given what the data shows. That's a fundamentally different dynamic, and it produces better outcomes.

Building a model that generates that kind of explainable output requires understanding what a sales conversation actually looks like, what objections arise and when, and what information creates confidence rather than confusion. That understanding doesn't come from the data.
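That judgement can't be automated. Once it has been made, though, attaching plain-language reasons to a score is mechanically simple. The Python sketch below assumes a linear score over features already scaled to 0-1. The feature names, weights and phrasing are all hypothetical. The reasons are just the phrases for the largest positive contributions.

```python
# Hypothetical feature weights and sales-ready phrasing, for illustration only.
WEIGHTS = {"roof_suitability": 0.35, "demand_cover": 0.40, "planning_clear": 0.25}
REASONS = {
    "roof_suitability": "the site geometry looks well suited to rooftop solar",
    "demand_cover": "estimated energy use suggests the economics are likely to be favourable",
    "planning_clear": "no planning constraints were found that would obviously block an installation",
}


def score_with_reasons(features: dict[str, float], top_n: int = 2) -> tuple[float, list[str]]:
    """Weighted sum of 0-1 features, plus phrases for the largest positive contributions."""
    contributions = {name: WEIGHTS[name] * features[name] for name in WEIGHTS}
    score = sum(contributions.values())
    ranked = sorted(contributions, key=contributions.get, reverse=True)
    reasons = [REASONS[name] for name in ranked[:top_n] if contributions[name] > 0]
    return score, reasons


score, reasons = score_with_reasons(
    {"roof_suitability": 0.9, "demand_cover": 1.0, "planning_clear": 0.0}
)
print(f"{score:.2f}:", "; ".join(reasons))
```

The design choice is structural rather than statistical. Every score leaves the model with its reasons attached, so the salesperson never receives a bare number.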

What Better Targeting Actually Means Commercially

The numbers are straightforward, even if the specifics vary.

A commercial solar sales programme operating at a 3% conversion rate on a list of 50,000 businesses produces 1,500 customers. The same programme operating at 6%, a rate that genuinely better targeting can make achievable, produces 3,000 from the same team, the same budget, and the same number of calls. The cost of building the model is recovered many times over in the first cohort alone.
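The arithmetic is simple enough to check directly in Python. This sketch assumes every business on the list is worked once and that programme cost is fixed. The cost figure is a placeholder; the halving of cost per customer holds for any fixed budget.

```python
def customers_won(list_size: int, conversion_rate: float) -> int:
    """Expected customers from working the whole list once."""
    return round(list_size * conversion_rate)


def cost_per_customer(programme_cost: float, customers: int) -> float:
    """Fixed programme cost spread across the customers it produces."""
    return programme_cost / customers


LIST_SIZE = 50_000
baseline = customers_won(LIST_SIZE, 0.03)  # 1,500 customers
targeted = customers_won(LIST_SIZE, 0.06)  # 3,000 customers

# Placeholder programme cost; the ratio below is the same for any fixed budget.
PROGRAMME_COST = 1_000_000.0
saving = 1 - cost_per_customer(PROGRAMME_COST, targeted) / cost_per_customer(PROGRAMME_COST, baseline)
print(f"{baseline} -> {targeted} customers; cost per customer falls by {saving:.0%}")
```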

Beyond volume, better targeting changes the shape of the pipeline. Fewer wasted calls mean a lower cost of sale. Higher-quality leads mean shorter sales cycles. Salespeople who are consistently calling businesses that are genuinely good fits for the product develop confidence and cadence. These effects compound.

There is also a less obvious benefit: the businesses that don't make the list. Calling a poor-fit prospect doesn't just waste resource — it creates a negative brand impression, and in a B2B context where word travels within sectors and supply chains, that impression spreads. Knowing who not to call has real value. That judgement, too, is encoded in the model — but only if the people who built it understood the commercial consequences of getting it wrong.

If any of this resonates with a challenge you're sitting on, we'd be glad to talk it through.